If you were born a man, you may sometimes wish you could be a woman.

So this time, we will use PyTorch to build a DeepFake AI that feminizes the CEO.

Please note that DeepFake carries legal risk because of portrait rights issues.

Please do not use it for unscrupulous purposes such as pasting the face of a popular actress onto an adult video.

What to prepare

- CEO (face image)

- Female Body (Female Video)

- Google Colaboratory GPU environment (*Jupyter Notebook is also OK)

- Distribution source code (*Not required if you want to start from scratch)

Feminizing the CEO

Check the NVIDIA GPU and CUDA versions.

!nvidia-smi

!nvcc --version

Install the DeepFake tool called sber-swap and its pretrained models.

!git clone https://github.com/sberbank-ai/sber-swap.git

%cd sber-swap

!wget -P ./arcface_model https://github.com/sberbank-ai/sber-swap/releases/download/arcface/backbone.pth

!wget -P ./arcface_model https://github.com/sberbank-ai/sber-swap/releases/download/arcface/iresnet.py

!wget -P ./insightface_func/models/antelope https://github.com/sberbank-ai/sber-swap/releases/download/antelope/glintr100.onnx

!wget -P ./insightface_func/models/antelope https://github.com/sberbank-ai/sber-swap/releases/download/antelope/scrfd_10g_bnkps.onnx

!wget -P ./weights https://github.com/sberbank-ai/sber-swap/releases/download/sber-swap-v2.0/G_unet_2blocks.pth

!wget -P ./weights https://github.com/sberbank-ai/sber-swap/releases/download/super-res/10_net_G.pth

Install the libraries.

!pip install mxnet-cu101mkl

!pip install onnxruntime-gpu==1.8

!pip install insightface==0.2.1

!pip install kornia==0.5.4

Import the modules.

import cv2

import torch

import time

import os

import matplotlib.pyplot as plt

from IPython.display import HTML

from base64 import b64encode

from utils.inference.image_processing import crop_face, get_final_image, show_images

from utils.inference.video_processing import read_video, get_target, get_final_video, add_audio_from_another_video, face_enhancement

from utils.inference.core import model_inference

from network.AEI_Net import AEI_Net

from coordinate_reg.image_infer import Handler

from insightface_func.face_detect_crop_multi import Face_detect_crop

from arcface_model.iresnet import iresnet100

from models.pix2pix_model import Pix2PixModel

from models.config_sr import TestOptions

Create the models.
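The model-loading code in this step reads the files downloaded earlier, so it may be worth confirming first that they are all in place. The following is a minimal sketch and not part of sber-swap: the helper name `find_missing` is hypothetical, the file list is simply copied from the `wget` commands above, and it assumes the working directory is the cloned `sber-swap` folder.

```python
from pathlib import Path

# Relative paths copied from the wget commands above
# (assumption: the current directory is the cloned sber-swap folder).
EXPECTED_FILES = [
    "arcface_model/backbone.pth",
    "arcface_model/iresnet.py",
    "insightface_func/models/antelope/glintr100.onnx",
    "insightface_func/models/antelope/scrfd_10g_bnkps.onnx",
    "weights/G_unet_2blocks.pth",
    "weights/10_net_G.pth",
]


def find_missing(root=".", expected=EXPECTED_FILES):
    """Return the expected files that are absent or empty under root (hypothetical helper)."""
    root = Path(root)
    missing = []
    for rel in expected:
        path = root / rel
        # A zero-byte file usually means the download was interrupted.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(rel)
    return missing


missing = find_missing()
if missing:
    print("Missing or empty files:")
    for rel in missing:
        print("  " + rel)
else:
    print("All downloaded files are present.")
```

If anything is reported as missing, re-running the corresponding `wget` line is usually enough.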

app = Face_detect_crop(name='antelope', root='./insightface_func/models')

app.prepare(ctx_id=0, det_thresh=0.6, det_size=(640, 640))

G = AEI_Net(backbone='unet', num_blocks=2, c_id=512)

G.eval()

G.load_state_dict(torch.load('weights/G_unet_2blocks.pth', map_location=torch.device('cpu')))

G = G.cuda()

G = G.half()

netArc = iresnet100(fp16=False)

netArc.load_state_dict(torch.load('arcface_model/backbone.pth'))

netArc = netArc.cuda()

netArc.eval()

handler = Handler('./coordinate_reg/model/2d106det', 0, ctx_id=0, det_size=640)

use_sr = True

if use_sr:

os.environ['CUDA_VISIBLE_DEVICES'] = '0'

torch.backends.cudnn.benchmark = True

opt = TestOptions()

model = Pix2PixModel(opt)

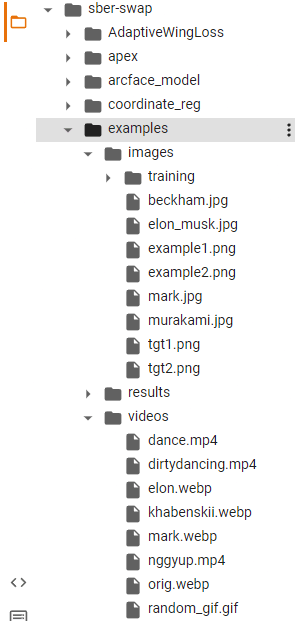

model.netG.train()Upload images and videos.

This time, target_type (the output format) is set to video.

| Variable name | Path |
|---|---|
| source_path | CEO image path |
| target_path | Female image path (when performing image conversion) |
| path_to_video | Female video path |
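Since a mistyped path only shows up later as a confusing error, a small check on the variables in the table can save time. This is a rough sketch under stated assumptions: `check_inputs` is a hypothetical helper, not something sber-swap provides, and it only verifies that the files needed for the chosen `target_type` exist.

```python
from pathlib import Path


def check_inputs(target_type, source_path, target_path, path_to_video):
    """Return a list of problems; an empty list means the inputs look usable.

    Hypothetical helper for illustration, not part of sber-swap.
    """
    problems = []
    if target_type not in ("image", "video"):
        problems.append(
            f"target_type must be 'image' or 'video', got {target_type!r}"
        )

    # The source face image is always needed; the target depends on target_type.
    required = {"source_path": source_path}
    if target_type == "image":
        required["target_path"] = target_path
    elif target_type == "video":
        required["path_to_video"] = path_to_video

    for name, value in required.items():
        if not Path(value).is_file():
            problems.append(f"{name} does not point to a file: {value}")
    return problems
```

Once the variables are set, calling `check_inputs(target_type, source_path, target_path, path_to_video)` and printing the returned list shows at a glance whether anything is missing.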

Images and videos are stored here in Google Colab.

By the way, you can download free images and videos from Pixabay and similar sites.

target_type = 'video'

source_path = 'examples/images/jin.png'

target_path = 'examples/images/woman.png'

path_to_video = 'examples/videos/woman.mp4'

source_full = cv2.imread(source_path)

OUT_VIDEO_NAME = "examples/results/result.mp4"

crop_size = 224

try:

    source = crop_face(source_full, app, crop_size)[0]

    source = [source[:, :, ::-1]]

    print("Everything is ok!")

except TypeError:

    print("Bad source images")

if target_type == 'image':

    target_full = cv2.imread(target_path)

    full_frames = [target_full]

else:

    full_frames, fps = read_video(path_to_video)

target = get_target(full_frames, app, crop_size)

Let's run inference.

batch_size = 40

START_TIME = time.time()

final_frames_list, crop_frames_list, full_frames, tfm_array_list = model_inference(

    full_frames,

    source,

    target,

    netArc,

    G,

    app,

    set_target=False,

    crop_size=crop_size,

    BS=batch_size

)

if use_sr:

    final_frames_list = face_enhancement(final_frames_list, model)

if target_type == 'video':

    get_final_video(

        final_frames_list,

        crop_frames_list,

        full_frames,

        tfm_array_list,

        OUT_VIDEO_NAME,

        fps,

        handler

    )

    add_audio_from_another_video(path_to_video, OUT_VIDEO_NAME, "audio")

    print(f'Full pipeline took {time.time() - START_TIME}')

    print(f"Video saved with path {OUT_VIDEO_NAME}")

else:

    result = get_final_image(

        final_frames_list,

        crop_frames_list,

        full_frames[0],

        tfm_array_list,

        handler

    )

    cv2.imwrite('examples/results/result.png', result)

Let's display the result.

if target_type == 'image':

show_images(

[

source[0][:,:,::-1],

target_full,

result

],

[

'Source Image',

'Target Image',

'Swapped Image'

],

figsize=(20, 15)

)

else:

video_file = open(OUT_VIDEO_NAME, "r+b").read()

video_url = f"data:video/mp4;base64,{b64encode(video_file).decode()}"

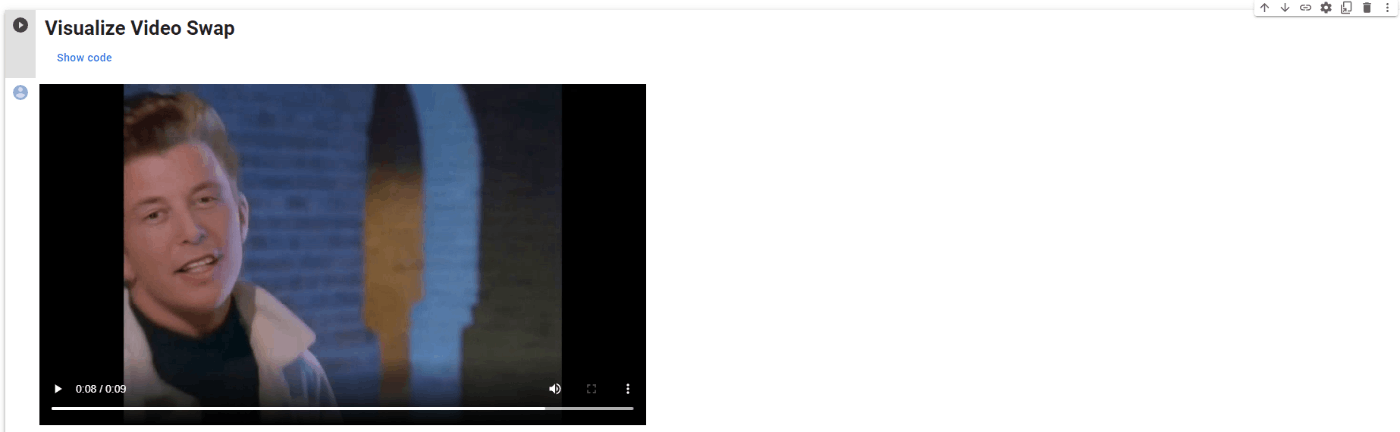

HTML(f"""<video width={800} controls><source src="{video_url}"></video>""")The previous video was output.

The output looks like this: (*See sber-swap official)
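The playback step embeds the video in the notebook as a base64 data URL rather than linking to the file. As a rough sketch of that idea in isolation, the two helpers below (`to_data_url` and `video_tag` are hypothetical names, not part of sber-swap or IPython) build the URL and the `<video>` tag using only the standard library.

```python
from base64 import b64encode


def to_data_url(data: bytes, mime: str = "video/mp4") -> str:
    """Encode raw bytes as a data URL that a browser can play inline."""
    return f"data:{mime};base64,{b64encode(data).decode('ascii')}"


def video_tag(data_url: str, width: int = 800) -> str:
    """Wrap a data URL in a minimal HTML5 <video> element."""
    return f'<video width="{width}" controls><source src="{data_url}"></video>'


# Tiny demonstration with placeholder bytes instead of a real video file.
print(to_data_url(b"abc"))
```

Base64 turns every 3 bytes into 4 characters, so the embedded string is about a third larger than the file itself. For long videos, downloading the result file may be more practical than playing it inline.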

Conclusion

Disgusting.
